Operating a modern datacentre involves a wide range of requirements and challenges. An effective way to tackle those challenges is to automate critical processes using Unipi Axon programmable logic controllers.

Introduction

Datacentres are an integral part of modern information services, and their reliable and effective operation is the most basic requirement for earning and keeping their clients' trust. For their money, datacentre customers purchase the certainty that their data is safe, always accessible and protected against downtime, damage or loss. Datacentre operators are thus obliged to maintain reliability and to deal with any issues without compromising the continuous operation of the datacentre.

Cooling and power supply are the most critical areas of datacentre operation. Higher-tier datacentres in particular therefore feature dual-circuit systems for both cooling and power supply, with the two branches independent of each other (i.e. the failure of one does not affect the other in any way). Highly complex cooling systems containing a wide range of components (compressors, pumps, cooling fans, servos etc.) require the ability to monitor all their parts and to respond to any issue as fast as possible, be it a local coolant leak or an extensive failure resulting in server overheating. The power supply must in turn have sufficient redundancy to keep the cooling operational even when the external power supply is cut off. For this reason, datacentres are usually equipped with backup diesel generators and UPS stations.

Recently, ecology has also been rising in importance. Datacentre operation is characterised by high power consumption, so an eco-friendly datacentre should be able to lower its power draw by optimising power distribution. A good example of this approach is free cooling, which uses cold outside air in winter to reduce the coolant temperature, allowing operators to switch the compressors off and save energy. Datacentres also generate substantial amounts of waste heat that can be reused for space heating or hot water. Such methods can significantly lower running costs and allow the datacentre owner to lower fees, making the datacentre more attractive to customers.

However, without a suitable control system, building a datacentre with all the above-mentioned features would be an extremely difficult task. Such a system should not only automate all critical systems but also respond automatically to any emergency without the need for human input, while allowing its operators to monitor as much operational data as possible to detect errors or malfunctions in time. A proper control system can also help prolong the lifespan of all components and can be used for time-effective planning of maintenance periods.

Description of a brand-new datacentre

A customer from the Czech Republic decided to expand its services by building a brand-new datacentre featuring 96 racks and a high-tech control system, aiming for 100% redundancy of all key components (cooling, power supply etc.) while stressing the eco-friendliness of the datacentre. To achieve these goals, the power distribution system is divided into two independent branches, each with its own transformer station and power lines. In case the external power is cut off, each branch also has its own UPS backup source and its own 750 kVA diesel generator. Part of the power demand is met by a solar panel array on the roof of the datacentre building, providing a maximum power output of 30 kW.

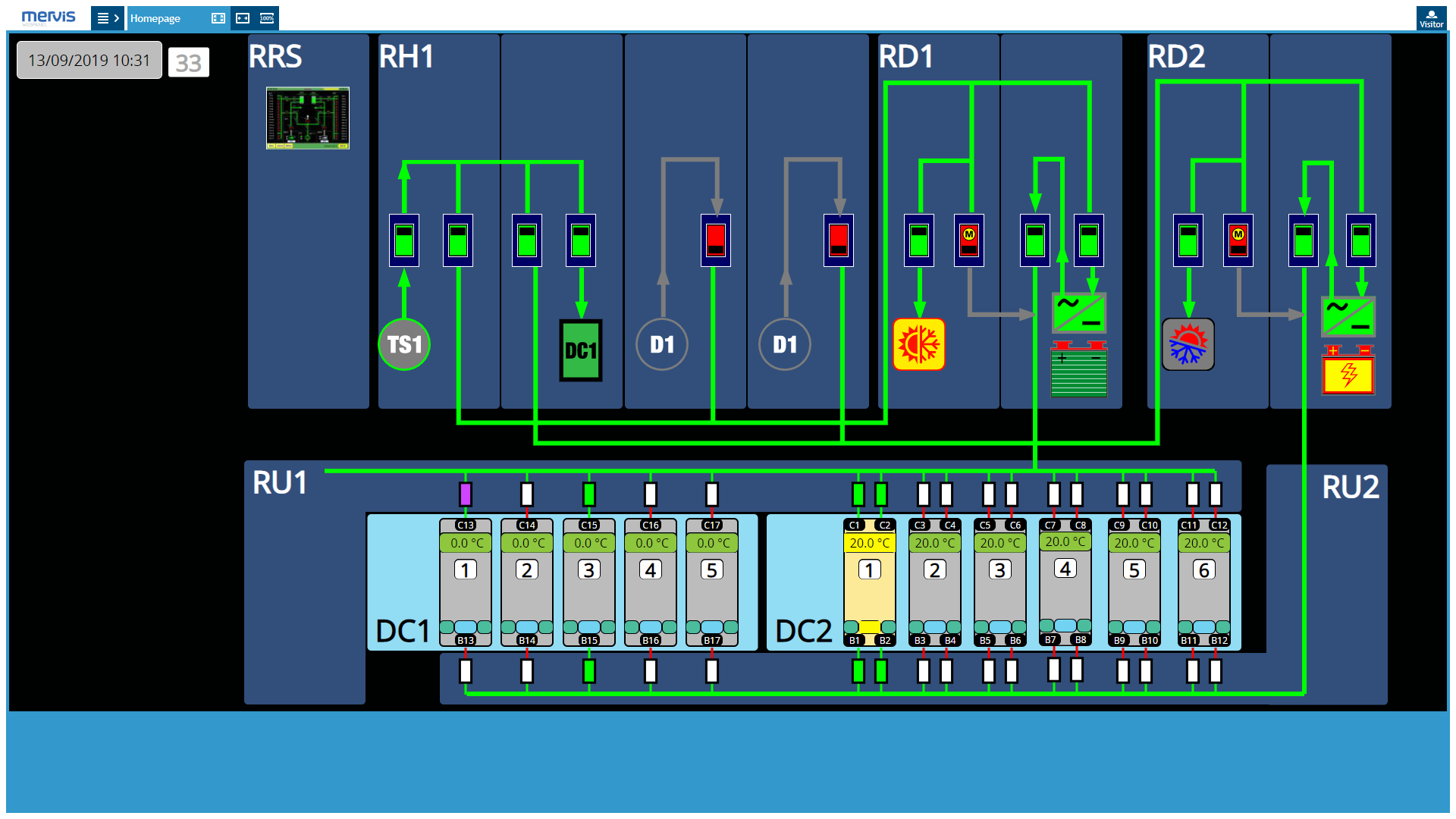

A general power distribution screen. This interface allows operators to monitor the power distribution from the transformer station to the individual server rooms.

The cooling system also consists of two independent AC cooling lines with a power output of 500 kW each. Both lines are interconnected, and their combined output can be directed into a single cooling branch if needed. The system also includes a free-cooling feature: if the outside air temperature drops below 8°C, datacentre operators can power down the cooling compressors and route the heated coolant directly to the roof fans, where the coolant transfers its heat to the outside air.
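
The free-cooling switchover can be illustrated as a simple threshold decision. The following is only a minimal sketch, not the project's actual control logic: the 8°C threshold comes from the text, while the 1°C hysteresis band and the function name are assumptions added so that the mode does not toggle rapidly when the temperature hovers around the threshold.

```python
FREE_COOLING_THRESHOLD_C = 8.0  # from the text: free cooling is possible below 8 °C
HYSTERESIS_C = 1.0              # assumed dead band, not taken from the source


def free_cooling_available(outside_temp_c: float, currently_active: bool) -> bool:
    """Return True when free cooling can be used instead of the compressors."""
    if currently_active:
        # Stay in free-cooling mode until the air warms past threshold + band.
        return outside_temp_c < FREE_COOLING_THRESHOLD_C + HYSTERESIS_C
    # Enter free-cooling mode only once the air drops below the threshold.
    return outside_temp_c < FREE_COOLING_THRESHOLD_C
```

In the described installation the final decision rests with the operators, so a function like this would most likely drive an on-screen recommendation rather than switch the compressors by itself.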

Circuit breaker management interface

Cooling of the individual server rooms uses the standard hot aisle/cold aisle method. Cold air propelled by a pair of cooling fans (one for each cooling branch) is blown into the middle of the server room (the cold aisle), from which it is drawn in by the individual servers. The servers' own cooling fans then expel the heated air into a pair of hot aisles running in parallel along each side of the server room. From the hot aisles, the hot air is drawn in by the cooling system and the process repeats. To ensure proper airflow, the cold aisle is separated from the hot aisles by polycarbonate walls.

The focus on eco-friendliness is reflected not only in the reuse of waste heat (see below) but also in the use of the eco-friendly Argonite extinguishing agent (a 50/50 mix of argon and nitrogen) and coolant leak protection. The main criteria for choosing hardware were low power consumption, long lifespan and economical operation.

Extensive datacentre automation

For automation of the above-mentioned systems, the customer used a control system designed by Unipi.technology and based on multiple programmable logic controllers installed throughout the facility. The particular models used are the Unipi Axon M515, S215 and L505 controllers, complemented by Unipi Extension xS10 and xS30 extension modules. The controllers are grouped into several nodes, with each node fulfilling a specific task.

Control and monitoring of diesel generators

The Axon L505 master controllers monitor the operational status of both diesel generators along with operational data such as oil temperature, battery voltage, RPM and fuel level. The time remaining until the next maintenance check is also tracked, along with an uptime timer and other parameters. The generators have their own controllers, which communicate with the Axon controllers via an RS485 interface (Modbus RTU) in parallel with Ethernet (Modbus TCP).
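
To give a feel for what travels over the RS485 line, here is a minimal sketch of a Modbus RTU "Read Holding Registers" request and of decoding the returned register values. It uses only the Python standard library. The unit ID, register addresses and the meaning of each register are assumptions for illustration, since the generator controllers' register map is not given in the source.

```python
import struct


def crc16_modbus(data: bytes) -> int:
    """Compute the CRC-16/MODBUS checksum (init 0xFFFF, reflected poly 0xA001)."""
    crc = 0xFFFF
    for byte in data:
        crc ^= byte
        for _ in range(8):
            if crc & 1:
                crc = (crc >> 1) ^ 0xA001
            else:
                crc >>= 1
    return crc


def read_holding_registers_request(unit_id: int, start: int, count: int) -> bytes:
    """Build a Modbus RTU 'Read Holding Registers' (function 0x03) request."""
    body = struct.pack(">BBHH", unit_id, 0x03, start, count)
    # The CRC is appended low byte first, as Modbus RTU requires.
    return body + struct.pack("<H", crc16_modbus(body))


def decode_registers(payload: bytes) -> list[int]:
    """Decode big-endian 16-bit register values from a response payload."""
    return list(struct.unpack(f">{len(payload) // 2}H", payload))


# Hypothetical usage: ask unit 1 for one register starting at address 0.
frame = read_holding_registers_request(unit_id=1, start=0, count=1)
print(frame.hex(" "))  # 01 03 00 00 00 01 84 0a
```

Modbus TCP carries the same function code and register data but replaces the unit-address-plus-CRC framing with an MBAP header, which is one reason the two transports can run side by side.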

Diesel generator control interface.

Cooling circuit control

Each cooling circuit is controlled by a Unipi Axon M515 controller complemented by a single Unipi Extension xS10 or xS30 module. The controller reads data from a suite of flow meters, thermometers and other sensors and uses it to regulate the coolant flow through a system of remote-controlled valves, while applying proportional control to the cooling fan output to achieve optimal cooling performance. If the controller fails, operators can control both cooling circuits manually via physical switches.
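
Proportional control of the fan output can be sketched as follows. This is an illustrative example only: the setpoint, gain and output limits are assumed values, and the real controller may well use a more elaborate scheme (for instance PI or PID control) than the plain proportional term shown here.

```python
def fan_output_percent(
    measured_temp_c: float,
    setpoint_c: float = 24.0,   # assumed cold-aisle setpoint
    gain: float = 20.0,         # assumed gain: % of output per °C of error
    min_output: float = 20.0,   # assumed minimum fan speed
    max_output: float = 100.0,
) -> float:
    """Return a fan output in percent, proportional to the temperature error."""
    error = measured_temp_c - setpoint_c
    output = min_output + gain * error
    # Clamp to the valid actuator range.
    return max(min_output, min(max_output, output))
```

With these assumed parameters, a reading 2°C above the setpoint yields 60% output, and anything 4°C or more above the setpoint saturates at 100%.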

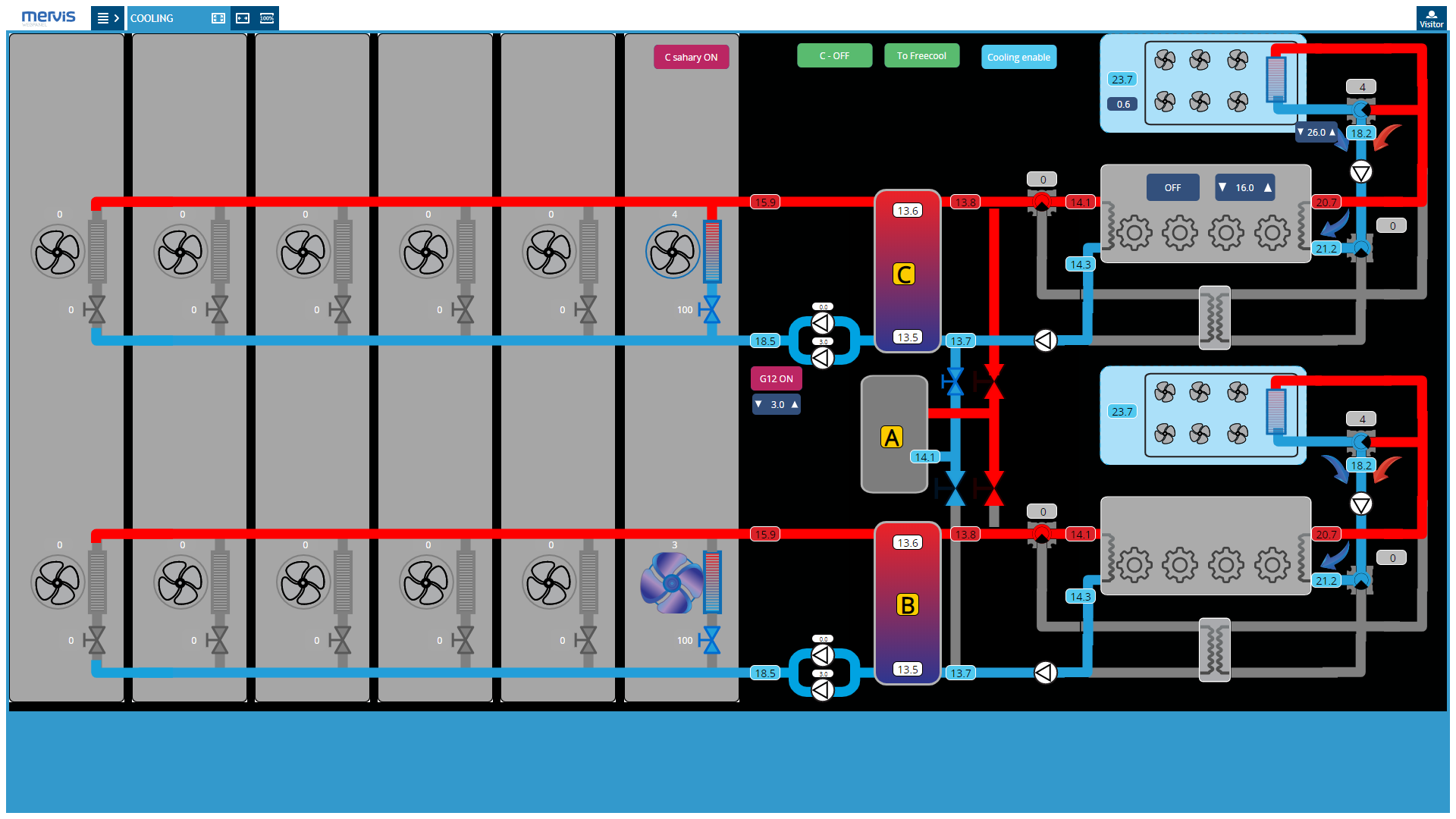

Main cooling control screen. Displayed are temperature levels in individual server rooms, lighting status, door status, the status of cooling fans and the position of control valves.

Access system

For security reasons, the ability to track and monitor personnel access is crucial. The datacentre uses personal RFID chips, issued to both employees and clients, to unlock doors via RFID chip readers. The access system runs as a secondary function of the cooling system controllers: data from the RFID readers is transferred via the RS485 interface to the Axon M515 controllers, which verify the access data and then unlock the given doors by switching their locks through relay outputs. Access logs are stored by the controller and allow personnel access and movement in the datacentre to be monitored.
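
The verify-then-unlock-then-log sequence can be sketched in a few lines. Everything below is hypothetical: the tag IDs, door names, permission table and class name are invented for illustration, and the actual relay switching is only indicated by a comment.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AccessController:
    # Hypothetical mapping: RFID tag ID -> set of door names the tag may open.
    permissions: dict[str, set[str]]
    log: list[tuple[datetime, str, str, bool]] = field(default_factory=list)

    def request_access(self, tag_id: str, door: str) -> bool:
        """Check a tag against the permission table and log the attempt."""
        granted = door in self.permissions.get(tag_id, set())
        self.log.append((datetime.now(timezone.utc), tag_id, door, granted))
        # On real hardware, a granted request would switch the relay output
        # wired to the door lock; here we only return the decision.
        return granted


controller = AccessController({"04A1B2": {"server-room-1"}})
controller.request_access("04A1B2", "server-room-1")  # granted
controller.request_access("04A1B2", "server-room-2")  # denied, but still logged
```

Logging denied attempts as well as granted ones is a deliberate choice in this sketch, since failed attempts are often the more interesting entries when reviewing personnel movement.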

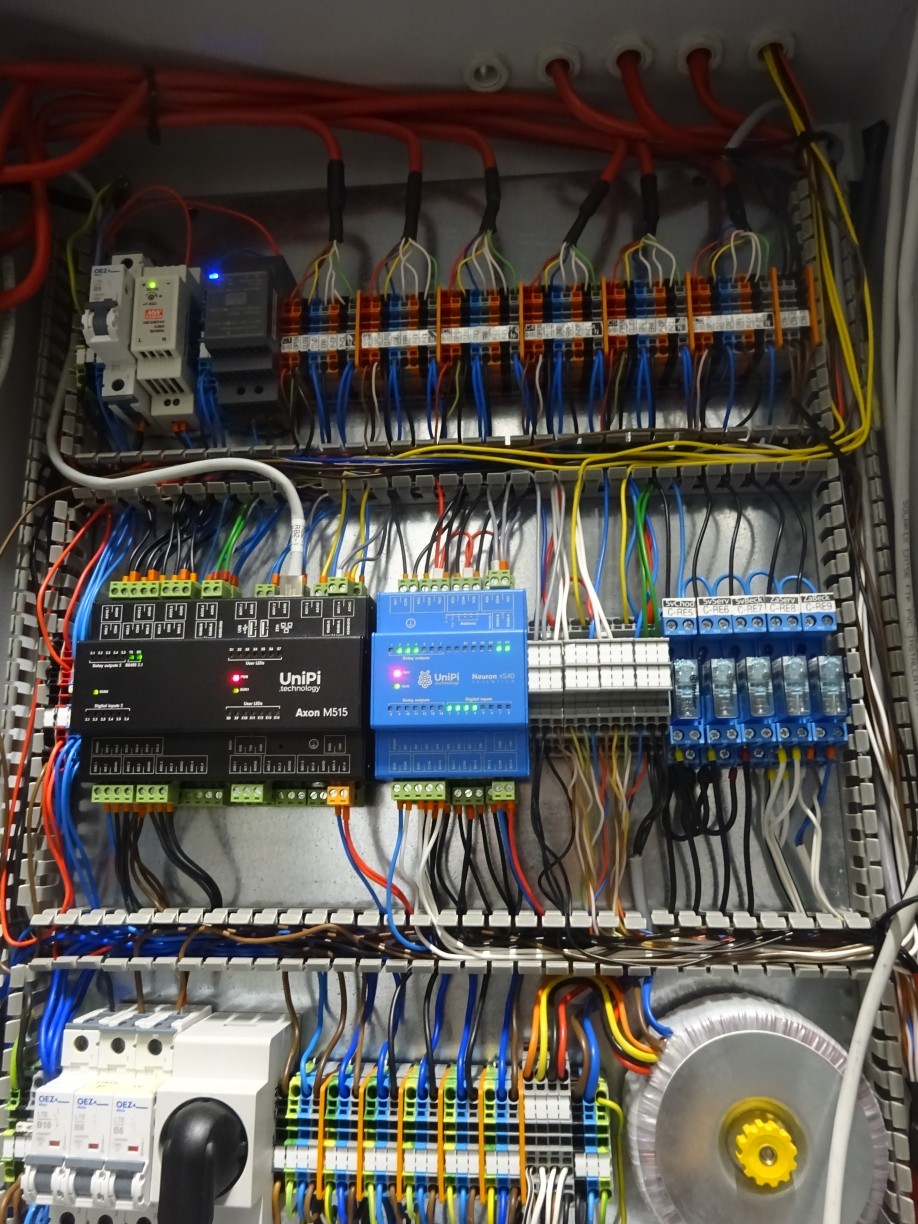

A distribution box with the Axon M515 PLC and the xS10 extension module

Differential pressure measurement

Differential pressure sensors are placed in the individual server rooms, measuring the difference between the air pressure in the cold and hot aisles. The controller processes the readings and sends the differential pressure value to the supervision centre. This value is used mainly for monitoring and maintaining a slight overpressure in the cold aisle, which ensures sufficient airflow in the desired direction. Without it, the server cooling fans would have to increase their output, considerably shortening their lifespan. Differential pressure measurement thus increases server lifespan and improves reliability.
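
As a small worked example, the check for a slight cold-aisle overpressure could look like the sketch below. The target band of 2 to 10 Pa is an assumption chosen purely for illustration; the source does not state the values used on site.

```python
def differential_pressure_pa(cold_aisle_pa: float, hot_aisle_pa: float) -> float:
    """Cold-aisle pressure minus hot-aisle pressure, in pascals."""
    return cold_aisle_pa - hot_aisle_pa


def overpressure_status(diff_pa: float, low_pa: float = 2.0, high_pa: float = 10.0) -> str:
    """Classify a differential pressure reading against an assumed target band."""
    if diff_pa < low_pa:
        return "too_low"   # airflow may stall or reverse; server fans work harder
    if diff_pa > high_pa:
        return "too_high"  # supply fans push more air than needed
    return "ok"
```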

Waste heat re-use

The cooling system produces large amounts of waste heat as a byproduct. The control system uses this heat both for water heating and for heating individual rooms in the datacentre building. Using a suite of 1-Wire thermometers located in the individual rooms, the system automatically maintains an optimal temperature, avoiding overheating or underheating.

Precise invoicing & billing

Each server room has one distribution box containing a single Unipi Axon S215 controller. The PLC reads data from energy meters equipped with S0 pulse outputs and sends it to the supervision centre for invoicing. Customers are then billed only for the energy consumed by their rented servers.
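
An S0 output emits a fixed number of pulses per kilowatt-hour, so the consumed energy follows from a simple pulse count. The sketch below assumes a meter constant of 1000 pulses per kWh, which is a common value but not one stated in the source; the real constant is printed on each meter.

```python
def pulses_to_kwh(pulse_count: int, pulses_per_kwh: int = 1000) -> float:
    """Convert a number of S0 pulses to energy in kWh."""
    return pulse_count / pulses_per_kwh


def billed_energy_kwh(start_count: int, end_count: int, pulses_per_kwh: int = 1000) -> float:
    """Energy used in a billing period, from two pulse counter readings."""
    if end_count < start_count:
        raise ValueError("counter went backwards; handle reset or overflow explicitly")
    return pulses_to_kwh(end_count - start_count, pulses_per_kwh)
```

For example, counter readings of 120000 at the start and 370000 at the end of a period correspond to 250 kWh under the assumed meter constant.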

A distribution box with the Axon S215 PLC and energy meter displays.

Valve position monitoring

In the cooling compressor room, a pair of Unipi Extension xS10 modules (one for each cooling circuit) monitors the position of the coolant flow valves and the flow direction. The master controllers connected to the extensions then relay this data to the supervision centre, allowing its operators to stay aware of the position of each flow valve and to react to any change in the valve settings.

xS10 extension modules

Supervision

All controller nodes are monitored by a central distribution box containing a pair of large Axon L505 controllers, which process the supervision centre's commands and relay them to the individual nodes through a system of relays, communication interfaces and various inputs. All collected data is routed to a modern supervision centre staffed by operators who monitor the datacentre's operation and enter control commands if needed. The master controllers also monitor each other; if one of them fails, the other automatically takes over its role. Thanks to the decentralised architecture, even a complete failure of both master controllers would not affect the node controllers.
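
Mutual monitoring of two master controllers is commonly built on heartbeats. The sketch below shows that general idea only; the source does not describe how the L505 pair actually detects a failure, so the active/standby split, the 3-second timeout and all names here are assumptions.

```python
from dataclasses import dataclass


@dataclass
class MasterController:
    name: str
    is_active: bool
    last_peer_heartbeat: float = 0.0  # time of the last heartbeat from the peer

    def on_peer_heartbeat(self, now: float) -> None:
        """Record that the peer controller has just reported in."""
        self.last_peer_heartbeat = now

    def check_peer(self, now: float, timeout_s: float = 3.0) -> bool:
        """Return whether the peer is alive; take over its role if it is not."""
        peer_alive = (now - self.last_peer_heartbeat) <= timeout_s
        if not peer_alive and not self.is_active:
            self.is_active = True  # the standby promotes itself
        return peer_alive
```

Each controller would run `check_peer` periodically with the current time; passing the time in as an argument keeps the logic easy to test without real clocks.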

The central distribution box featuring a pair of Axon L505 PLCs

Redundancy and high reliability

Axon controllers are based on an industrial computer with a quad-core ARM CPU, 1 GB of RAM and 8 GB of internal eMMC memory, giving them high computing power and very short response times. All components are encased in a durable aluminium chassis that provides IP20 protection and also functions as a passive cooler. Thanks to this design, the Axon controllers offer an extended operating temperature range (up to +70°C), rugged construction and resilience to external hazards. Each input/output circuit board features its own microprocessor; if the computing module fails, these processors maintain the basic functionality of the given I/O board and prevent failure of the entire controlled process. At the level of the whole infrastructure, control of cooling and power distribution is divided among multiple Axon controllers. Thanks to this architecture, the failure of a single controller does not affect other parts of the system.

Software solution

The entire remote management solution is based on the Mervis platform, the officially supported software solution for Unipi controllers. Mervis comes pre-installed on each Axon controller and combines professional programming tools conforming to the IEC 61131-3 PLC programming standard with a user-friendly interface. The software also includes an online cloud database, an integrated HMI editor and a SCADA interface. The HMI editor was used to create the entire datacentre control interface, through which the supervision centre crew can monitor all necessary data on the cooling circuits, diesel generators, server rooms, circuit breakers and more. In an emergency, the system automatically sends alerts to the supervision centre. If, for example, a circuit breaker fails or a diesel generator is running short on fuel, operators receive an interface notification along with an e-mail message.
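
The two alert conditions mentioned above can be expressed as simple rules. The following sketch is plain Python for illustration and does not use or represent the Mervis API; the breaker names, the 25% fuel warning level and the `Alert` structure are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    source: str
    message: str


def evaluate_alerts(
    breaker_ok: dict[str, bool],
    fuel_level_percent: float,
    fuel_warning_percent: float = 25.0,  # assumed warning level
) -> list[Alert]:
    """Return one alert per failed breaker, plus a low-fuel alert if needed."""
    alerts = [
        Alert(name, "circuit breaker fault")
        for name, ok in breaker_ok.items()
        if not ok
    ]
    if fuel_level_percent < fuel_warning_percent:
        alerts.append(Alert("diesel generator", f"fuel low: {fuel_level_percent:.0f} %"))
    return alerts
```

The returned list could then be handed to whatever delivers the notifications, such as the HMI and an e-mail sender, keeping rule evaluation separate from delivery.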